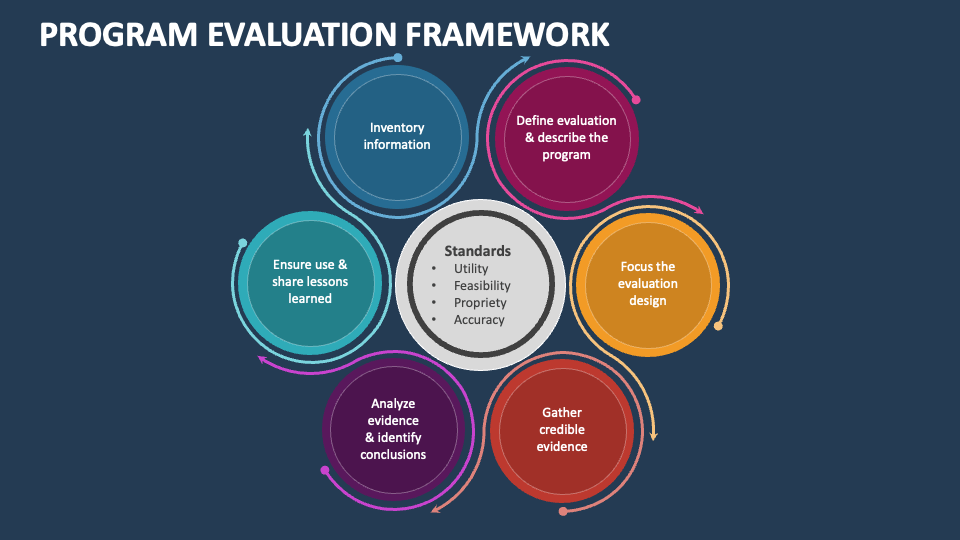

The practical approach endorsed by this framework focuses on questions that can improve the program. It also makes it possible to respond to common concerns about program evaluation. For instance, many evaluations are not undertaken because they are seen as too expensive. The cost of an evaluation, however, is relative; it depends on the question being asked and the level of certainty desired for the answer. A simple, low-cost evaluation can still deliver information valuable for understanding and improvement.

Phases and core elements of complex intervention research

By focusing the evaluation design, we mean planning in advance where the evaluation is headed and what steps it will take to get there. It is neither possible nor useful for an evaluation to try to answer all questions for all stakeholders; there must be a focus. A logic model helps here: it reveals assumptions about the conditions for program effectiveness and provides a frame of reference for one or more evaluations of the program. A detailed logic model can also be a basis for estimating the program's effect on endpoints that are not directly measured.

Resources

In addition, recognizing the benefits of teledentistry can raise awareness and encourage its adoption and implementation, which could be explained by the technology acceptance model⁵¹. Therefore, a gamified online role-play with a robust design and implementation appeared to have potential for enhancing self-perceived confidence and awareness in the use of teledentistry.

The idea of informed evaluation design, or the strategic mixing of methods, applies to essentially all evaluations. According to Bamberger (2012, 1), “Mixed methods evaluations seek to integrate social science disciplines with predominantly quantitative and predominantly qualitative approaches to theory, data collection, data analysis and interpretation.” Although it is difficult to truly integrate different methods within a single evaluation design, the benefits of mixed methods designs are worth pursuing in most situations. The benefits are not just methodological; through mixed designs and methods, evaluations are better able to answer a broader range of questions and more aspects of each question.

How to Use Formplus Online Form Builder for Evaluation Surveys

Quantity affects the level of confidence or precision users can have: how sure we can be that what we have learned is true. In the course of an evaluation, indicators may need to be modified or new ones adopted. Also, measuring program performance by tracking indicators is only one part of evaluation and should not be mistaken for a sufficient basis for decision making in itself. There are definite perils to using performance indicators as a substitute for completing the evaluation process and reaching fully justified conclusions. For example, an indicator such as a rising unemployment rate may be wrongly taken to reflect a failing program when it may actually be due to changing environmental conditions beyond the program's control. One way to develop multiple indicators is to create a "balanced scorecard," which contains indicators carefully selected to complement one another.
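To make the link between quantity and precision concrete, here is a minimal Python sketch. It assumes a simple random sample and a yes/no indicator (for example, the share of participants reporting an improvement) and uses the standard normal-approximation margin of error for a proportion, z·sqrt(p(1 − p)/n). The function name `margin_of_error` and the 60% figure are hypothetical, chosen only for illustration.

```python
import math


def margin_of_error(p_hat: float, n: int, z: float = 1.96) -> float:
    """Half-width of a normal-approximation (Wald) confidence interval for a proportion.

    Hypothetical helper: z = 1.96 corresponds to roughly 95% confidence.
    """
    if n <= 0:
        raise ValueError("sample size must be positive")
    if not 0.0 <= p_hat <= 1.0:
        raise ValueError("p_hat must be between 0 and 1")
    return z * math.sqrt(p_hat * (1.0 - p_hat) / n)


if __name__ == "__main__":
    # Hypothetical survey result: 60% of respondents report an improvement.
    for n in (25, 100, 400, 1600):
        print(f"n={n:5d}  margin of error = +/-{margin_of_error(0.6, n):.3f}")
```

Quadrupling the sample size halves the margin of error, so each further gain in precision costs progressively more data collection. For small samples or proportions near 0 or 1, an exact or Wilson interval is usually preferable to this approximation.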

Applying the framework: Conducting optimal evaluations

Programme theory describes how an intervention is expected to lead to its effects and under what conditions. Although it may seem obvious that evaluation design should be matched to the evaluation questions, in practice much evaluation design is still too often methods driven. Evaluation professionals have implicit and explicit preferences and biases toward the approaches and methods they favor. The rise in randomized experiments for causal analysis is largely the result of a methods-driven movement. Although this guide is not the place to discuss whether methods-driven evaluation is justified, there are strong arguments against it. One such argument is that in IEOs (and in many similar institutional settings), one does not have the luxury of being too methods driven.

Take into account the following important factors when developing an evaluation design

An interrupted time series uses repeated measures before and after delayed implementation of the independent variable (e.g., the program) to help rule out other explanations. This relatively strong design, with comparisons within the group, addresses most threats to internal validity. These threats are alternative explanations for your claim that what you did caused changes in the direction you were aiming for. They are generally posed by factors operating at the same time as your program or intervention that might have an effect on the issue you're trying to address.
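The core logic of an interrupted time series can be sketched in a few lines of Python. This simplified version is an assumption on our part, not a full segmented regression: it fits a straight-line trend to the pre-implementation measurements, projects that trend into the post-implementation period as the expected counterfactual, and reports the average gap between observed and projected values. The names `fit_line` and `its_effect` and the sample series are hypothetical.

```python
from typing import Sequence, Tuple


def fit_line(xs: Sequence[float], ys: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least-squares fit of y = intercept + slope * x."""
    n = len(xs)
    if n < 2 or n != len(ys):
        raise ValueError("need at least two paired points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


def its_effect(series: Sequence[float], start: int) -> float:
    """Mean gap between observed post-implementation values and the projected pre-implementation trend.

    `series` holds equally spaced repeated measures; `start` is the index of the
    first measurement taken after the program was implemented.
    """
    if not 2 <= start < len(series):
        raise ValueError("need >= 2 pre-implementation points and >= 1 post point")
    intercept, slope = fit_line(list(range(start)), list(series[:start]))
    # Compare each post-implementation value with what the old trend predicts.
    gaps = [series[t] - (intercept + slope * t) for t in range(start, len(series))]
    return sum(gaps) / len(gaps)


if __name__ == "__main__":
    # Hypothetical monthly measures: a steady upward trend, then a jump after month 6.
    observed = [10, 11, 12, 13, 14, 15, 21, 22, 23, 24]
    print(its_effect(observed, start=6))  # prints 5.0
```

A naive before/after comparison of the same data would credit the program with the pre-existing upward trend as well; projecting the earlier trend is what lets the repeated pre-implementation measures rule that explanation out. A full analysis would typically fit a segmented regression that estimates level and slope changes jointly and accounts for autocorrelation and seasonality.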

Case studies involve in-depth research into a given subject to understand how it works and why it succeeds. Summative evaluation allows an organization to measure how successful a project has been. Such results can be shared with stakeholders, target markets, and prospective investors. CDC Evaluation Resources provides a list of resources for evaluation, as well as links to professional associations and journals. The formality of any evaluation agreement depends upon the relationships among those involved.

Among the issues to consider when focusing an evaluation are:

Attribution focuses on the arrows between specific activities/outputs and specific outcomes: whether progress on an outcome is related to a specific activity or output. A feedback system was carefully designed and built into the gamified online role-play. Immediate feedback appears to be a key feature of interactive learning environments²⁹. Formative feedback was delivered to learners instantly through the simulated patient's verbal and non-verbal communication, including words (content), tone of voice, facial expressions, and gestures.
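One way to picture attribution is to treat a logic model as a directed graph from activities/outputs to outcomes, where each arrow becomes a candidate attribution question. The minimal Python sketch below assumes that structure; the `LogicModel` class and the activity and outcome labels are hypothetical, loosely based on the role-play example.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class LogicModel:
    """Hypothetical logic model: each activity/output maps to the outcomes it is expected to influence."""

    links: dict[str, list[str]] = field(default_factory=dict)

    def add_link(self, source: str, outcome: str) -> None:
        # Record one arrow from an activity/output to an outcome.
        self.links.setdefault(source, []).append(outcome)

    def attribution_questions(self) -> list[str]:
        # One attribution question per arrow, in insertion order.
        return [
            f"Is progress on '{outcome}' related to '{source}'?"
            for source, outcomes in self.links.items()
            for outcome in outcomes
        ]


if __name__ == "__main__":
    model = LogicModel()
    model.add_link("gamified role-play sessions", "confidence in using teledentistry")
    model.add_link("immediate feedback", "confidence in using teledentistry")
    for question in model.attribution_questions():
        print(question)
```

Listing the arrows explicitly makes it easier to decide which links the evaluation will actually test, which supports the focusing step rather than trying to answer every question at once.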

The 30 more specific standards are grouped into four categories: utility, feasibility, propriety, and accuracy.

The amount and type of stakeholder involvement will be different for each program evaluation. For instance, stakeholders can be directly involved in designing and conducting the evaluation. They can be kept informed about the progress of the evaluation through periodic meetings, reports, and other means of communication. These standards help answer the question, "Will this evaluation be a 'good' evaluation?" They are recommended as the initial criteria by which to judge the quality of program evaluation efforts. Over the past years, there has been a growing trend toward better use of evaluation to understand and improve practice. The systematic use of evaluation has helped many community-based organizations solve problems and do what they do better. Around the world, many programs and interventions have been developed to improve conditions in local communities.

Overall, it is important to find a consultant whose approach to evaluation, background, and training best fit your program’s evaluation needs and goals. Be sure to check all references carefully before you enter into a contract with any consultant. Program staff may be pushed to do evaluation by external mandates from funders, authorizers, or others, or they may be pulled to do evaluation by an internal need to determine how the program is performing and what can be improved.

At the same time, if the evaluation concerns a product that has been on the market for some time, the focus is on using historical data to determine how well its market performance has lived up to earlier expectations. The timing of the evaluation often affects which data are used and even how those data are interpreted. Based on the qualitative findings, a conceptual framework was developed in which a gamified online role-play was conceptualized as a learning strategy to support learners in implementing teledentistry in their clinical practice (Fig. 4).

Before finalizing the design, it can be helpful to have one or more independent evaluators conduct a technical review of it. More than one reviewer may be needed to provide expert advice on the specific methods proposed, including the indicators and measures to be used. Ensure that each reviewer is experienced in a range of methods and designs and is well briefed on the context, so they can provide situation-specific advice. Then arrange for the evaluation management structure (e.g., a steering committee) to review the design as well.
